A Little Background

There are different schools of thought when it comes to defining parametric design. By schools of thought, I mean ferocious armies fighting to death on a desolate battlefield. At this point, the only safe move in attempting a definition would be to go for the most conceptual one. Fortunately, it might also be a good vantage point for developing a healthy discussion on the topic.

Greg Lynn provides a satisfying answer in that regard: “The processional model of the subject as either the animating force or as the occupant of privileged points of view assumes that architecture is a static frame which intersects motion.” Lynn veers away from architecture as the cult of verticality, describing instead unfolding structures of movement and temporal flow. [1]

I like this definition because it precedes parametricism as a generalized practice and, at the same time, affirms that architectural design has always been inherently parametric in nature, a nature that emerging CAD tools merely unearthed. Just as a four-dimensional cube (the tesseract) is traditionally represented using a series of 3D projections, Lynn formulates architectural iterations as mere slices of higher-dimensional spaces.

However, if architecture and design study inherently parametric forms, how does one define parametric design? Isn’t all design parametric? By contrast with traditional design, where shapes and structures are pulled out of the dark from higher-dimensional spaces, we could say that parametric design is the effective attempt to give a structure to those spaces, a temporal flow, in order to facilitate their exploration.

A State Of Confusion

When parametricism left the Eden-esque garden of conceptual ideas and pure mathematics, it felt very much like sharing a forbidden fruit pie. Entities having widely different meanings in the context of mathematical problems were aggregated under the name of “parameters” when the concept made its way to design practice.

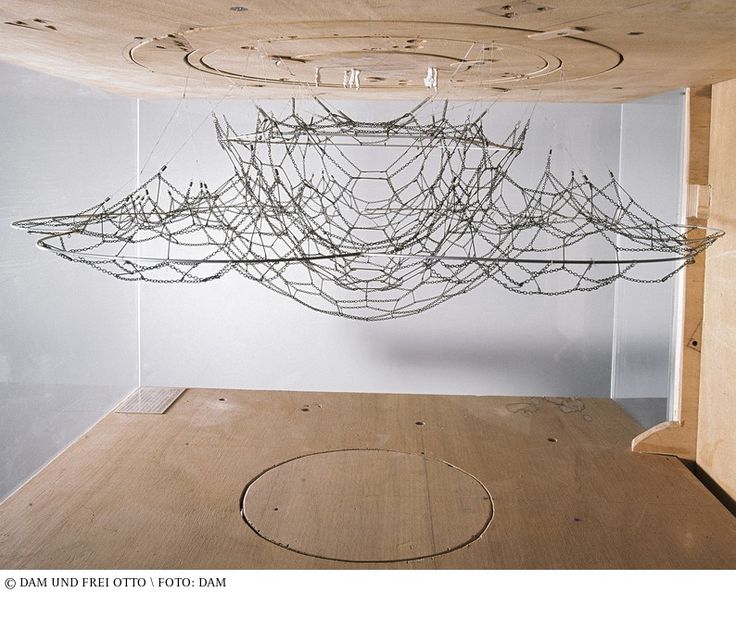

Take Frei Otto’s seminal experiments with catenary curves, or even Gaudí’s upside-down model for the church of Colònia Güell. In both cases, gravity and a mirror make up a primitive analogue parametric model. The designer has direct control over a few parameters, most importantly the length of the strings and their anchoring locations in the roof or on the adjacent strings. However, in Gaudí’s example, something as crucial as the height of the different arches is not a parameter: it is an outcome of the model. In this particular instance, the analogue nature of the process makes it easy to play with the length of the strings until the desired height is reached; in most parametric systems, the task is far more complicated. Theoretically, it is possible to find the length of the arch from its height, but it means solving complex systems of differential equations. It is even worse for visual parametric languages, where the accumulation of nodes and their articulation replaces the mathematical function with a black box that is impossible to invert.
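To make the inversion problem concrete, here is a minimal sketch for the simplest possible case: a single chain hung between two level anchors. It is an assumption-laden toy, not a model of Gaudí’s network of strings, and the function names `catenary_sag` and `length_for_sag` are hypothetical. The string length is the parameter and the sag (the height of the mirrored arch) is the outcome. Even here, neither direction has a closed form, so both are solved by bisection.

```python
import math


def catenary_sag(length, span):
    """Forward model: sag of a chain of `length` hung between two level
    anchors `span` apart. Uses y = a*cosh(x/a), so with u = span/(2a):
    length = 2a*sinh(u) and sag = a*(cosh(u) - 1)."""
    if length <= span:
        raise ValueError("the chain must be longer than the span")
    ratio = length / span              # equals sinh(u)/u
    lo, hi = 1e-9, 1.0
    while math.sinh(hi) / hi < ratio:  # widen the bracket until it holds the root
        hi *= 2.0
    for _ in range(200):               # bisection: sinh(u)/u increases with u
        mid = 0.5 * (lo + hi)
        if math.sinh(mid) / mid < ratio:
            lo = mid
        else:
            hi = mid
    u = 0.5 * (lo + hi)
    a = span / (2.0 * u)               # catenary parameter
    return a * (math.cosh(u) - 1.0)


def length_for_sag(target_sag, span):
    """Inverse problem: chain length that produces `target_sag`.
    Found by bisection on the forward model, since sag grows with length."""
    lo = span * (1.0 + 1e-6)           # nearly taut chain, sag close to zero
    hi = span + 2.0 * target_sag       # long enough to sag at least target_sag
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if catenary_sag(mid, span) < target_sag:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Which string length gives an arch of height 1.0 over a span of 2.0?
length = length_for_sag(target_sag=1.0, span=2.0)
print(length, catenary_sag(length, span=2.0))
```

The inversion works here only because the forward model is a known, monotonic function of a single parameter. With several interacting strings, or with an opaque graph of nodes, neither property can be taken for granted.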

Too often, two fundamental properties of parametric systems in mathematics are neglected or even forgotten: the independence of parameters and their explicit influence on the system. Models that were meant to be built as functional, flexible systems have become so intricate that they can’t be properly explored anymore.

The need to control numerical and visual quantities resulting from complex definitions has given rise to the use of genetic and evolutionary solvers (e.g. Galapagos). Such solvers are great in desperate situations, when problems are so complex that no inherent structure can be uncovered. In all other cases, evolutionary solvers are but a mere capitulation: one is resigned to forfeit any structure and take a shot in the dark to try and reach the desired solution.
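As an illustration of what such a shot in the dark looks like, here is a minimal sketch of a (1+1) evolution strategy, one of the simplest evolutionary schemes. It is not how Galapagos is implemented; `black_box_model` is a hypothetical stand-in for an opaque parametric definition, and `evolve` and its default values are assumptions chosen for the example.

```python
import random


def black_box_model(params):
    """Hypothetical stand-in for an opaque parametric definition:
    two input parameters, one measured output."""
    x, y = params
    return x * x + 3.0 * y


def evolve(model, target, start, sigma=0.5, iterations=2000, seed=0):
    """(1+1) evolution strategy: mutate the current best candidate with
    Gaussian noise and keep the child whenever it is no worse."""
    rng = random.Random(seed)          # seeded so that runs are repeatable
    best = list(start)
    best_error = abs(model(best) - target)
    for _ in range(iterations):
        child = [p + rng.gauss(0.0, sigma) for p in best]
        error = abs(model(child) - target)
        if error <= best_error:
            best, best_error = child, error
    return best, best_error


# Search for parameters whose measured output is 10.0.
params, error = evolve(black_box_model, target=10.0, start=[0.0, 0.0])
print(params, error)
```

The solver only ever sees inputs and outputs. Different seeds will generally land on different parameter pairs that all reach the target, and nothing in the result reveals how those pairs relate to one another.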

Of course, from a practical point of view, an ideal parametric definition embeds every possible measurable quantity as a parameter. There are obvious practical benefits to this goal, but it comes at the expense of the basic coherence and continuity of the design space. In mathematical terms, it is equivalent to the design space losing its manifold properties.

Exploring The Design Space

Patrik Schumacher breaks down the design process into two fundamental sub-processes: the generation of alternative solution candidates and the selection of an alternative according to test results on the basis of posited evaluation/selection criteria. The overall rationality and effectiveness of a design process depends thus on two principally independent factors: its power to generate and its power to test/select. [2]

We have discussed how the latter (power to test/select) is sometimes undermined by practical decisions. What about the generation of alternative solution candidates?

Surely this is not about exploring physical dimensions or constraint-based parameters. By definition, any resulting piece of design needs to satisfy those criteria, and being able to fit them is more of an engineering problem than a design one. What remains are the other parameters, which could be called subjective. This family of subjective parameters most closely matches the semantics of mathematics, in the sense that the parameters of a problem are inputs that continuously generate a space of solutions. Since science leaves little room for subjectivity, they are either fixed empirically (the infamous “magic numbers”) or fed to an optimization process (see below).

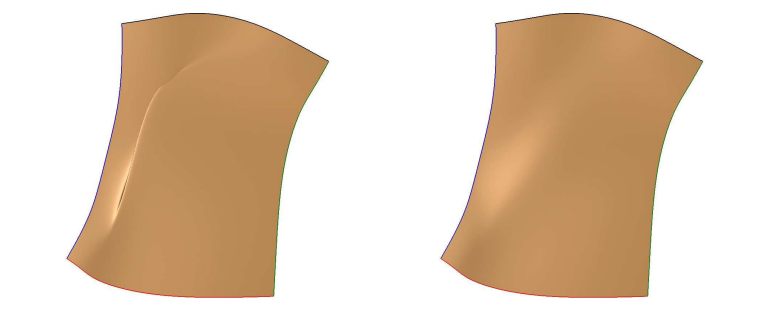

Left: surface patch bounded by constrained lines of curvature with random parameters.

Right: same surface patch after minimization of a fitness function. [3]

I believe this is what Schumacher means by “the power to generate”: generating a space of solutions once every hard requirement is fulfilled. Those parameters are sometimes an embarrassment in science, but in parametric design it is crucial that they remain at the heart of the designer’s process. They keep the process organic.

It is also crucial that this space of parameters be structured and easily explorable. Parameters have to be defined with an intent, from the start. On the scale of architectural projects, the space is too vast to explore, and mathematical optimization is often used to reduce its dimension. However, it is not sufficient, as Daniel Davis remarks: “One of the problems you have with optimization is that not everything is captured by the fitness function. You see this a lot in the work in the 1960s. At that time there was a lot of interest in optimizing floor plates and lots of that work failed. They were trying to work out the optimal walking distance between rooms. The algorithm failed because it couldn’t encapsulate the entire design space. They could find the optimum layout for walking but that wasn’t necessarily important in terms of architecture, or it wasn’t the only important factor in successful architecture.” [4]

A collaborative workflow will always need a structured design space that one can naturally explore, even at a large scale. But it is perhaps at a smaller scale, when a parametric model is not necessarily a complex articulation of different design paradigms, that the need for parameters carrying a design intent is most relevant. An intimate piece of design can be a self-contained entity, an object in more than three dimensions, where parameters become dimensions as crucial as the spatial ones. Then, exposing a parameter is not only about achieving omnipotent control. It is also about creating an organic link between a person and an evolving piece of design.

[1] Greg Lynn, Animate Form, 1999

[2] Patrik Schumacher, Design parameters to parametric design, 2014

[3] Luc Biard et al., Construction of rational surface patches bounded by lines of curvature, 2009

[4] Daniel Davis, A history of parametric, 2013
